I am working on a model to represent content in a manner free of words. Two other main parts are tightly related to it: recognizing content by a variable set of given features, and reordering the stored content so that implicitly represented content becomes explicit while the already explicit part becomes more streamlined at the same time.

There is one main thing I avoided in my approach: black boxes. That is, things that rest on people's beliefs rather than on proven facts, and things that are so complex that they can only be estimated, not proven.

For that reason, I avoided two common approaches: artificial intelligence and linguistics.

Representing The Content

When dealing with thesauri and classifications, I noticed that these ontologies force abstraction relationships between the notions they contain. So I thought about the alternative: forcing the partial (part-of) relationship instead. What would result if all notions had to be represented by partial relationships? A heavily wired graph. Originally, thesauri and classifications were maintained manually, so it seemed clear to me that no one would want to maintain a densely wired graph just to keep track of notions.

Nevertheless, I continued the quest. Software is available nowadays, so why dismiss the possibility?

Over time, I came to the insight that notions might be constituted mainly by sets of features. With that, I had "words" represented; more precisely, the items that serve as "templates" for the notions. I began to develop an algorithm to recognize items by their features, by varying sets of features, effectively removing the need for globally unique identifiers ("IDs" for short). I had in mind a peer-to-peer network that would automatically exchange item data sets. That demanded a way, if not to identify items, then at least to recognize them by their features.

Since items can be part of other items (a wheel can be part of a car, and a car part of a car transporter), I switched from sets to graphs. To make clear that the edges used by my model are not simple graph edges, and to distinguish them from classification/thesaurus relationships, I call them "connections".

Then I noticed similarities to neurological structures, mainly neurons, axons, and dendrites. I also noticed that the tools developed so far could not represent a simple "not", e.g. "a car that is not red", so I began to experiment with meaning-modifying edges. Keeping in mind that there is probably no magical higher instance providing the neurons with knowledge (for example, knowledge that one item is the opposite of another), I kept away from connections that inject new content or knowledge; in this case, knowledge about how to interpret an antonym relationship. I strove for the smallest possible set of connection variants.

However, even without content-modifying connections, the tagging phenomenon common on the web could benefit greatly: the connections between the items make clear which item is an implication of which other(s). Applied to tagging, users would no longer need to mention the implications, because they would be available from the underlying ontology. (And the ontology could be extended by a central site, by individual users, or by a peer-to-peer network of users.)
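A minimal sketch of how the implications of a few core tags could be derived, assuming (hypothetically) that the ontology is stored as a plain mapping from each item to the items it consists of, using the wheel/car/car transporter example from above. The names `FEATURES` and `expand_tags` are illustrative only, not part of the model as described.

```python
from collections import deque

# Hypothetical toy ontology: each item maps to the items it is made of
# (its parts/features), following the wheel / car / car transporter example.
FEATURES: dict[str, set[str]] = {
    "car transporter": {"car"},
    "car": {"wheel"},
    "wheel": set(),
}


def expand_tags(core_tags: set[str], features: dict[str, set[str]]) -> set[str]:
    """Return the core tags plus every item they imply, transitively."""
    implied = set(core_tags)
    queue = deque(core_tags)
    while queue:
        item = queue.popleft()
        for part in features.get(item, set()):
            if part not in implied:  # skip known items; also guards against cycles
                implied.add(part)
                queue.append(part)
    return implied


print(sorted(expand_tags({"car transporter"}, FEATURES)))
# ['car', 'car transporter', 'wheel']
```

A user would tag only <car transporter>; <car> and <wheel> follow from the ontology.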

Recognizing The Content

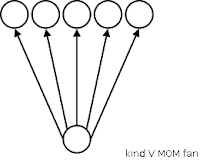

With that peer-to-peer network in mind, I needed a way to identify items stored on untrusted remote hosts. I noticed that collecting sets of features, which themselves would be considered items, meant nothing but defining the items by their features. Different peers (precisely: users of the peer software) might define the same items by different features, which might leave only some of the locally known features matching those set remotely. However, most of the time that is enough to recognize an item by its features: some features point to a single item only. These features are valuable because they are specific to that very item. If one of these specific features is given, the item it points to is most probably the one meant.
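A small sketch of "specific" features, assuming the connections are kept as a hypothetical mapping from each feature to the items it points to. A feature is treated as specific when it points to exactly one item; given features unknown to the local peer are simply ignored. All names are illustrative.

```python
# Hypothetical feature -> items connections; names are illustrative only.
POINTS_TO: dict[str, set[str]] = {
    "meows": {"cat"},
    "trunk": {"elephant"},
    "four legs": {"cat", "dog", "elephant"},
    "tail": {"cat", "dog", "elephant"},
}


def specific_features(points_to: dict[str, set[str]]) -> dict[str, str]:
    """Map every feature that points to exactly one item to that item."""
    return {f: next(iter(items)) for f, items in points_to.items() if len(items) == 1}


def recognize_by_specific(given: set[str], points_to: dict[str, set[str]]) -> set[str]:
    """Items singled out by specific features among the given ones.

    Features unknown locally (e.g. set only by a remote peer) are ignored.
    """
    specific = specific_features(points_to)
    return {specific[f] for f in given if f in specific}


print(recognize_by_specific({"meows", "tail", "striped"}, POINTS_TO))  # {'cat'}
```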

Usually, though, every feature points to multiple items at once. Most probably, every item a feature points to was chosen reasonably, i.e. it is neither random nor complete trash. Thus, a simple count might be enough: how many of the given features point to which items? And how large is the share of given features pointing to a particular item, compared to that item's total number of incoming feature connections? I call the incoming feature connections a node receives from the given features "stimulations".
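The counting described above can be sketched as follows, assuming a hypothetical feature-to-items mapping and reading the "quota" as an item's stimulations divided by that item's total number of incoming feature connections (one possible interpretation of the text).

```python
from collections import Counter

# Hypothetical feature -> items connections; names are illustrative only.
POINTS_TO: dict[str, set[str]] = {
    "meows": {"cat"},
    "barks": {"dog"},
    "trunk": {"elephant"},
    "four legs": {"cat", "dog", "elephant"},
    "tail": {"cat", "dog", "elephant"},
}


def stimulations(given: set[str], points_to: dict[str, set[str]]) -> Counter:
    """Count how many of the given features point to each item."""
    return Counter(item for f in given for item in points_to.get(f, ()))


def quotas(given: set[str], points_to: dict[str, set[str]]) -> dict[str, float]:
    """Stimulations of each item divided by its total incoming feature connections."""
    incoming = Counter(item for items in points_to.values() for item in items)
    return {item: n / incoming[item] for item, n in stimulations(given, points_to).items()}


q = quotas({"meows", "four legs"}, POINTS_TO)
print(max(q, key=q.get))  # cat (2 of its 3 incoming connections are stimulated)
```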

One condition applies to recognition: if a node receives a particular number of stimulations, e.g. two, that node is considered "active" itself and hence stimulates its successor nodes as well. For a basic implementation of recognition, this approach is enough. A more sophisticated kind of recognition also takes into account nodes that were stimulated but not activated.
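A minimal sketch of this threshold rule, assuming a hypothetical node-to-successors mapping and the example threshold of two. Each node that becomes active stimulates its successors exactly once; the stimulation counts are returned as well, since a more sophisticated recognition would also look at stimulated but inactive nodes.

```python
from collections import Counter

# Hypothetical network: node -> successor nodes it stimulates when active.
SUCCESSORS: dict[str, set[str]] = {
    "four legs": {"cat", "dog"},
    "meows": {"cat"},
    "collar": {"pet"},
    "cat": {"pet"},
    "dog": {"pet"},
}

THRESHOLD = 2  # the example value from the text


def recognize(given: set[str], successors: dict[str, set[str]],
              threshold: int = THRESHOLD) -> tuple[set[str], Counter]:
    """Propagate stimulations from the given features.

    A node that collects `threshold` stimulations becomes active and
    stimulates its own successors once. Returns (active nodes, counts).
    """
    active = set(given)
    pending = list(given)
    stim: Counter = Counter()
    while pending:
        node = pending.pop()
        for succ in successors.get(node, ()):
            stim[succ] += 1
            if succ not in active and stim[succ] >= threshold:
                active.add(succ)
                pending.append(succ)
    return active, stim


active, stim = recognize({"four legs", "meows"}, SUCCESSORS)
print(sorted(active))  # ['cat', 'four legs', 'meows']
```

Here <dog> and <pet> are stimulated once each but stay inactive; adding <collar> to the given features would activate <pet> too.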

Having recognition at hand, even at this most basic level, would finally support the tagging approach above of leaving out the implications: a handful of features would be enough to determine the item meant. Applied to online search, the search engine could likewise determine the x most probably meant items and include them in the search.
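A sketch of such a query expansion, assuming a hypothetical keyword-to-items mapping taken from the shared ontology: the x items receiving the most stimulations from the keywords are appended to the query. The function names and the default `x=1` are illustrative; ties between equally stimulated items are not resolved in any meaningful way here.

```python
from collections import Counter

# Hypothetical keyword -> items connections from the shared ontology.
POINTS_TO: dict[str, set[str]] = {
    "four legs": {"cat", "dog", "elephant"},
    "purrs": {"cat"},
    "fetches": {"dog"},
}


def most_probable_items(keywords: list[str], points_to: dict[str, set[str]], x: int) -> list[str]:
    """The x items receiving the most stimulations from the keywords."""
    stim = Counter(item for k in keywords for item in points_to.get(k, ()))
    return [item for item, _ in stim.most_common(x)]


def expand_query(keywords: list[str], points_to: dict[str, set[str]], x: int = 1) -> list[str]:
    """Append the most probably meant items to the original keywords."""
    extra = [i for i in most_probable_items(keywords, points_to, x) if i not in keywords]
    return keywords + extra


print(expand_query(["four legs", "purrs"], POINTS_TO))  # ['four legs', 'purrs', 'cat']
```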

Beyond these, I see one big opportunity. Currently, if people get hold of a physical item they do not know and cannot identify, they need to have it recognized by someone else, usually the vendor, to whom they have to bring the part. Some parts are heavy; others cannot be moved easily. And common tools are of no help in identifying the object: search engines do not operate on visual appearance, and information-science tools like thesauri and classifications fail completely, simply because they prefer abstraction relationships over partial ones.

Software able to recognize items by their features would overcome such issues completely, regardless of the kind of feature: it would not matter whether the feature is a word (i.e. a name or label) or a color, shape, taste, smell, or something else. And, unlike relational databases, there would be no need for an additional table column for each kind of feature, just a uniform method to attach new features to items.
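A sketch of that uniform method, assuming (hypothetically) that every feature is stored as a `(kind, value)` pair pointing to items. A new kind of feature, such as a smell, is attached the same way as a name, with no schema change. `FeatureStore` and its methods are illustrative names only.

```python
from collections import Counter, defaultdict
from typing import Optional


class FeatureStore:
    """Hypothetical store in which every feature, whatever its kind, is one more node."""

    def __init__(self) -> None:
        self.points_to = defaultdict(set)  # (kind, value) -> items

    def attach(self, kind: str, value: str, item: str) -> None:
        """Connect a feature of any kind to an item."""
        self.points_to[(kind, value)].add(item)

    def best_match(self, given: set[tuple[str, str]]) -> Optional[str]:
        """Item receiving the most stimulations from the given features, if any."""
        stim = Counter(item for f in given for item in self.points_to.get(f, ()))
        return stim.most_common(1)[0][0] if stim else None


store = FeatureStore()
store.attach("name", "wheel cover", "hubcap")
store.attach("shape", "round", "hubcap")
store.attach("color", "silver", "hubcap")
store.attach("shape", "round", "coin")
store.attach("color", "gold", "coin")
store.attach("smell", "metallic", "coin")  # a new kind of feature, no schema change

print(store.best_match({("shape", "round"), ("color", "silver")}))  # hubcap
```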

Reorganizing The Content

Also directly related to that peer-to-peer network: peers exchanging item data sets (e.g. nodes plus context, i.e. connections and attached neighbor nodes) could produce heavy wiring, superfluous chains of single-feature-single-item definitions, and lots of unidentified implicit item definitions. That needs to be avoided.

Since such chains can often simply be cut down, I concentrated on the cases of unidentified implicit definitions. For simplicity, I imagined a set of features pointing to a set of items. Some of the features might point to the same items in common; e.g. the features <four legs>, <body>, <head>, and <tail> together would point to the items <cat>, <dog>, and <elephant>. Note that <cat>, <dog>, and <elephant> are all animals, and all these animals have a body, four legs, a head, and a tail. Thus, <animal> is one implication of this set of features and items. The implication is not mentioned explicitly, but it is there.

Consequently, this whole partial network could be replaced by another one that mentions the <animal> node as well: <four legs>, <body>, <head>, and <tail> would become features of the new <animal> node, and <animal> itself would become a common feature of <cat>, <dog>, and <elephant>.
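A minimal sketch of this replacement, under the simplifying assumption (not stated in the text) that only features pointing to exactly the same set of items are grouped; partial overlaps would need a more elaborate rule. The function name `reorganize` and the `<implicit-N>` placeholder names are hypothetical, since naming the new node still needs a human.

```python
from collections import defaultdict
from itertools import count


def reorganize(points_to: dict[str, set[str]]) -> dict[str, set[str]]:
    """Insert an unnamed intermediate node for each group of two or more
    features that point to exactly the same set of two or more items."""
    groups: dict[frozenset, list[str]] = defaultdict(list)
    for feature, items in points_to.items():
        groups[frozenset(items)].append(feature)

    result: dict[str, set[str]] = {}
    new_ids = count(1)
    for items, features in groups.items():
        if len(features) >= 2 and len(items) >= 2:
            node = f"<implicit-{next(new_ids)}>"  # a human still has to name it
            for feature in features:
                result[feature] = {node}
            result[node] = set(items)
        else:
            for feature in features:
                result[feature] = set(items)
    return result


ANIMALS = {"cat", "dog", "elephant"}
BEFORE = {f: set(ANIMALS) for f in ("four legs", "body", "head", "tail")}
BEFORE["meows"] = {"cat"}

AFTER = reorganize(BEFORE)
print(AFTER["head"], sorted(AFTER["<implicit-1>"]))
# {'<implicit-1>'} ['cat', 'dog', 'elephant']
```

Counting the connections, `BEFORE` holds 13 and `AFTER` holds 8.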

By that, the network would become more streamlined (since the number of connections needed would be reduced from (number of features) × (number of items) to only (number of features) + (number of items)), hence also more lightweight. Also, implied items would become visible.
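Worked through for the example above, with $F$ features fully connected to $I$ items ($F = 4$: <four legs>, <body>, <head>, <tail>; $I = 3$: <cat>, <dog>, <elephant>). The last line, derived here rather than taken from the text, shows when the intermediate node actually saves connections:

```latex
\begin{aligned}
\text{before:}\quad & F \cdot I = 4 \cdot 3 = 12 \text{ connections}\\
\text{after:}\quad  & F + I = 4 + 3 = 7 \text{ connections}\\
\text{saving iff:}\quad & F \cdot I > F + I \iff (F-1)(I-1) > 1
\end{aligned}
```

So for two features and two items ($4$ versus $4$) nothing is gained, while every larger group saves connections.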

While this approach makes the implications visible, it raises two new issues. First, the identified implications cannot be named without human help, at least not easily. (Recognition might help here, but I skip that.) Second, the newly introduced node (<animal>) conflicts with recognition. For example, if another node such as <meows> pointed directly to the <cat> node, then after the reorganization a given set of only <meows> and <head> would no longer result in <cat>: each of the given features would stimulate its successor node, but none of those successors would be activated. Letting nodes actively collect stimulations from their predecessor nodes could be a solution, but I am not yet quite sure. As mentioned initially, this is a work in progress.
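The conflict can be reproduced with a small sketch, assuming the threshold of two from the recognition section and hypothetical before/after networks for the <animal> example. Before the reorganization, <meows> and <head> both stimulate <cat> directly; afterwards, <head> only reaches <animal>, so <cat> and <animal> receive one stimulation each and neither becomes active.

```python
from collections import Counter

THRESHOLD = 2  # example value from the text


def active_nodes(given, successors, threshold=THRESHOLD):
    """Nodes active after propagating stimulations from the given features."""
    active, pending, stim = set(given), list(given), Counter()
    while pending:
        for succ in successors.get(pending.pop(), ()):
            stim[succ] += 1
            if succ not in active and stim[succ] >= threshold:
                active.add(succ)
                pending.append(succ)
    return active


ANIMALS = {"cat", "dog", "elephant"}
FEATURES = ("four legs", "body", "head", "tail")

# Before reorganization: every feature points to every animal directly.
before = {f: set(ANIMALS) for f in FEATURES}
before["meows"] = {"cat"}

# After reorganization: the features point to <animal>, which points to the animals.
after = {f: {"animal"} for f in FEATURES}
after["animal"] = set(ANIMALS)
after["meows"] = {"cat"}

given = {"meows", "head"}
print("cat" in active_nodes(given, before))  # True
print("cat" in active_nodes(given, after))   # False
```

Giving one more animal feature, e.g. <tail>, would activate <animal> and, through it, <cat> again.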

Still, reorganization would automate the identification of implications, and people could provide labels for the added implication nodes. This leads to another effect of the model.

Overcoming the Language Barrier

I mentioned that I kept away from connections injecting knowledge the software cannot reach. That is not all: I strive to avoid completely any kind of unreachable content, i.e. anything that needs an external mind or knowledge store to interpret such injected content or knowledge. Hence, I also avoided operating on the basis of item labels.

Instead, all items and features (where "features" is just another name for items located one level below the items initially considered) are identified by IDs that are unique only locally. I would store the names of the items elsewhere, so that item names in multiple languages could point to the very same item. That helps in localizing the system, but it also opens the chance to overcome an issue dictionaries cannot handle: some languages do not provide an immediate translation for a given word, because the underlying concept is different. The English term "to round out" and the German "abrunden" are such an example: the German variant takes an external point of view ("to round by taking something away"), while the English one takes an internal one.
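A sketch of keeping labels apart from items, assuming (hypothetically) that items are bare, locally unique integer IDs and that labels are stored as `(language, label) -> ID` pairs. A translation is then only found when both labels point to the very same item; "to round out" and "abrunden" are modeled as two distinct items, so no forced translation appears. `Lexicon` and its methods are illustrative names.

```python
from itertools import count


class Lexicon:
    """Hypothetical label store: items are locally unique IDs, labels point to them."""

    def __init__(self) -> None:
        self._next_id = count(1)
        self._labels: dict[tuple[str, str], int] = {}  # (language, label) -> item ID

    def new_item(self) -> int:
        return next(self._next_id)

    def add_label(self, item_id: int, language: str, label: str) -> None:
        self._labels[(language, label)] = item_id

    def labels_of(self, item_id: int, language: str) -> list[str]:
        return [lbl for (lang, lbl), i in self._labels.items()
                if i == item_id and lang == language]

    def translate(self, language: str, label: str, target: str) -> list[str]:
        """Labels in `target` for the very item `label` denotes (may be empty)."""
        item_id = self._labels.get((language, label))
        return [] if item_id is None else self.labels_of(item_id, target)


lex = Lexicon()
cat = lex.new_item()
lex.add_label(cat, "en", "cat")
lex.add_label(cat, "de", "Katze")

# Two slightly different notions: two items, each labeled in its own language only.
round_out = lex.new_item()
abrunden = lex.new_item()
lex.add_label(round_out, "en", "to round out")
lex.add_label(abrunden, "de", "abrunden")

print(lex.translate("en", "cat", "de"))       # ['Katze']
print(lex.translate("de", "abrunden", "en"))  # []
```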

Because it relies on items/notions rather than on labels, the model offers a chance to label the appropriate, perhaps even slightly mismatching, nodes: there is no longer any need for two languages to label one and the same node, which probably would not match exactly anyway. In short, I think that in different cultures many notions differ slightly from similar ones of other cultures, but each culture labels only its own notions, ignoring the slightly different points of view of other cultures. I imagine this notions/labeling issue as puddles: each culture has its own puddle for each of its notions. Comparing two languages, some puddles overlap greatly, maybe even totally, but some have no intersection at all. Those are the terms that have no counterpart in the other language.
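One way to make the puddle picture concrete, assuming each notion is described by a set of features and using the Jaccard index as an assumed overlap measure (the text names no measure): identical puddles score 1.0, puddles without intersection score 0.0. The feature sets below are hypothetical, loosely following the "round out" / "abrunden" example.

```python
def overlap(notion_a: set[str], notion_b: set[str]) -> float:
    """Share of features two notions have in common (Jaccard index, an assumed
    measure): 1.0 for identical puddles, 0.0 for puddles without intersection."""
    union = notion_a | notion_b
    return len(notion_a & notion_b) / len(union) if union else 1.0


# Hypothetical feature sets, loosely following the "round out" / "abrunden" example.
ROUND_OUT = {"make round/complete", "internal point of view"}
ABRUNDEN = {"make round/complete", "external point of view", "take something away"}

print(overlap(ROUND_OUT, ABRUNDEN))  # 0.25
```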

In the long term, I consider building upon notions/items rather than on words a real chance to overcome the language barrier.

Conclusion

Besides the option of dropping the custom of labeling different items with the same name (as thesauri tend to do to reduce maintenance effort) and the possible long-term chance of overcoming the language barrier, I see three main benefits for the web, mainly for tagging and online search:

- Tagging could be reduced to the core tags; all implications could be left to the underlying ontology.

- Based on the same ontology, sets of search keywords could be examined to "identify" the items most probably meant. The peer-to-peer network could apply the same approach when exchanging item data sets.

- Finally, the reorganization would keep the ontology graph lightweight, thereby easing its maintenance. Also, the automatic detection of implications would help users keep their tags clear and definite. That could reduce blur in tag choice and thus increase the precision of search results.

Updates: none so far